Storage Spaces is a component available in Windows 2012. Microsoft has used this technique in their Microsoft Analytics Platform System or previously named SQL Server 2012 Parallel Data Warehouse.

But as I will show they got stuck in the 'old way of thinking' which will only work (slightly?) better if we live in a perfect world, were all data is requested at the same time. In a lot of cases this is not so....

The hardware behind Microsoft Analytics Platform System

When looking closer at the hardware the Hewlett and Packard (HP) solution based on the DL360 Gen8, which has 2 PCIe slots, is equipped with one H221 SAS controller with two 4x6 Gb/s ports and one Melanox Infini Band 56 Gb/s controller. In the HP solution the JBOD is divided in two: every node manages 32 disks.

Dell addresses the solution with the PowerEdge R620 which has maximum 3 PCIe slots but 2 JBODs so in order to address all 102 disks in the JBOD's they need to do the same as HP namely one SAS 4x6 Gb/s connections per JBOD. The Quanta solution resembles the Dell solution with 3 nodes and 2 JBOD's.

The software architecture of Microsoft Analytics Platform System

Because the solution needs to be high available and therefore be capable to move from one defective node to another the whole SQL Server environment is visualised in a Windows 2012 VM.

In order to provide Cluster Shared Volumes access to the JBOD's every node has also a Windows 2012 storage cluster VM up and running. If a server controller fails the node uses the storage services provided by the Storage VM on the second or in case of Dell and Quanta one of the other nodes. But this VM need to be accessed by the Infini Band network. This means that in case of a node failure performance of both nodes will be reduced to 50% in case of an HP solution and by 33% in case of a Dell/Quanta solution.

The storage architecture of Microsoft Analytics Platform System

The way storage is organised is pretty standard: every node addresses 16 mirrored data volumes build on several disks in the JBOD(s) and there is a general tempdb and LOG volume. This resembles more or less the traditional RAID1+0 or RAID10 solution.

If you have read my other post on Storage Spaces I have my thoughts about this storage architecture.

If your data is not so well organised for your query and for some reason you hit only one or a few volumes you also hit the physical limits of the mirror or RAID1+0 or RAID10 architecture. This is certainly the case when your query collects all data and writes them in the tempdb and then performs transformations in the tempdb on it.

But wait: there is a better way to use Storage Spaces with SQL Server

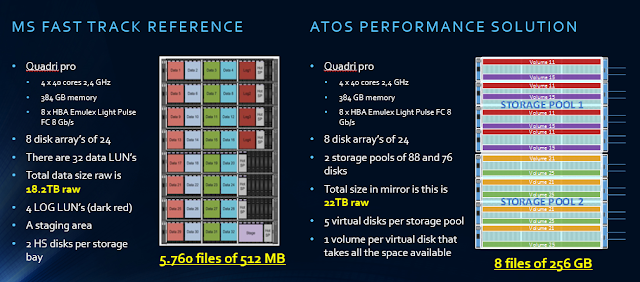

My tests on existing Microsoft Fast Track reference architectures provided a 50% performance improvement using the same hardware and can handle way more simultaneous users.

My newly developed ATOS Performance Solutions based on SQL Server 2014 with Windows 2012R2 Storage Spaces show that we are able serve at least a 100 users simultaneously with a reasonable good performance (from 12s to 90s).

How did we do it?

Like I explained in my previous post we created one storage space for all the hard disks (80) and one for all the SSD disks (4 per JBOD) in the 4 JBOD's (4). I created 8 virtual disks on the HDD storage pool using all the available disks and 2 virtual disks on the SSD storage pool.

All virtual disks were created with 64 KB interleave size and the volumes formatted with the same 64KB block size. The data files were placed on the 8 HDD volumes and the tempdb files (12) and the LOG files were placed on a separate SSD volume.

And the performance?

What we saw was when we used only one volume for sequential reads of 512KB we hit already 4.3 GB/s of I/O which is impossible to get when using a traditional RAID10 solution. Using more and more volumes we got up to 8.7 GB/s using only 4 JBOD's.

With a traditional Fast Track reference solution we obtained 9.6 GB/s with 8 disk arrays and 32 LUN's or volumes.

Loading the data was also way easier: we used only 4 parallel loading tasks in SQL Server Integration Services and obtained an effective data load rate of 700 MB/hour.

We had obtained actually more than 1 TB/hour but we lost 300 MB/hour on the fact that since SQL Server 2012 data is first loaded in the tempdb before it is transferred to the actual table. This was all visible in the performance tools we used.

Using a Microsoft Fast Track Reference architecture way harder to obtain this value and the solution used is way harder to maintain in a production environment.

It is all about latency!

The most important about this new architecture with Storage Spaces is that the latency was drastically reduced. A Fast Track reference architecture will provide the results with a 24ms+ latency and our ATOS Performance Solutions based on SQL Server 2014 was capable to return the data much faster with less processors (think about the license costs reduction!) and therefore could serve way more users (easily more than a 100 simultaneously in fact).

The future architecture of Microsoft Analytics Platform System

I don't think that Microsoft Analytics Platform System as it exist now will continue: it is just too complex. Next to that it uses old 6 Gb/s storage hardware when everywhere there are 12 Gb/s solutions available and some JBOD's do not even support the use of SSD's (HP). Not very flexible then.

A new and more hybrid solution will be based on a Windows Storage Server solution with Cluster Shared Volumes. Probably one Cluster per rack. The storage cluster solution means that the servers used need to have more PCI slots to provide a resilient solution.

On top of that will be running the 8 (HP) or 9 (Dell/Quanta) SQL Server compute nodes and because the CSV are highly available by design (maybe using two Melinox 56 Gb/s Infini Band cards per node) and a lower latency and a higher performance on the tempdb overall performance off the Microsoft Analytics Platform System increases beyond expectations.

But as I will show they got stuck in the 'old way of thinking' which will only work (slightly?) better if we live in a perfect world, were all data is requested at the same time. In a lot of cases this is not so....

The hardware behind Microsoft Analytics Platform System

When looking closer at the hardware the Hewlett and Packard (HP) solution based on the DL360 Gen8, which has 2 PCIe slots, is equipped with one H221 SAS controller with two 4x6 Gb/s ports and one Melanox Infini Band 56 Gb/s controller. In the HP solution the JBOD is divided in two: every node manages 32 disks.

Dell addresses the solution with the PowerEdge R620 which has maximum 3 PCIe slots but 2 JBODs so in order to address all 102 disks in the JBOD's they need to do the same as HP namely one SAS 4x6 Gb/s connections per JBOD. The Quanta solution resembles the Dell solution with 3 nodes and 2 JBOD's.

The software architecture of Microsoft Analytics Platform System

Because the solution needs to be high available and therefore be capable to move from one defective node to another the whole SQL Server environment is visualised in a Windows 2012 VM.

In order to provide Cluster Shared Volumes access to the JBOD's every node has also a Windows 2012 storage cluster VM up and running. If a server controller fails the node uses the storage services provided by the Storage VM on the second or in case of Dell and Quanta one of the other nodes. But this VM need to be accessed by the Infini Band network. This means that in case of a node failure performance of both nodes will be reduced to 50% in case of an HP solution and by 33% in case of a Dell/Quanta solution.

The storage architecture of Microsoft Analytics Platform System

The way storage is organised is pretty standard: every node addresses 16 mirrored data volumes build on several disks in the JBOD(s) and there is a general tempdb and LOG volume. This resembles more or less the traditional RAID1+0 or RAID10 solution.

If you have read my other post on Storage Spaces I have my thoughts about this storage architecture.

If your data is not so well organised for your query and for some reason you hit only one or a few volumes you also hit the physical limits of the mirror or RAID1+0 or RAID10 architecture. This is certainly the case when your query collects all data and writes them in the tempdb and then performs transformations in the tempdb on it.

But wait: there is a better way to use Storage Spaces with SQL Server

My tests on existing Microsoft Fast Track reference architectures provided a 50% performance improvement using the same hardware and can handle way more simultaneous users.

My newly developed ATOS Performance Solutions based on SQL Server 2014 with Windows 2012R2 Storage Spaces show that we are able serve at least a 100 users simultaneously with a reasonable good performance (from 12s to 90s).

How did we do it?

Like I explained in my previous post we created one storage space for all the hard disks (80) and one for all the SSD disks (4 per JBOD) in the 4 JBOD's (4). I created 8 virtual disks on the HDD storage pool using all the available disks and 2 virtual disks on the SSD storage pool.

All virtual disks were created with 64 KB interleave size and the volumes formatted with the same 64KB block size. The data files were placed on the 8 HDD volumes and the tempdb files (12) and the LOG files were placed on a separate SSD volume.

And the performance?

What we saw was when we used only one volume for sequential reads of 512KB we hit already 4.3 GB/s of I/O which is impossible to get when using a traditional RAID10 solution. Using more and more volumes we got up to 8.7 GB/s using only 4 JBOD's.

With a traditional Fast Track reference solution we obtained 9.6 GB/s with 8 disk arrays and 32 LUN's or volumes.

Loading the data was also way easier: we used only 4 parallel loading tasks in SQL Server Integration Services and obtained an effective data load rate of 700 MB/hour.

We had obtained actually more than 1 TB/hour but we lost 300 MB/hour on the fact that since SQL Server 2012 data is first loaded in the tempdb before it is transferred to the actual table. This was all visible in the performance tools we used.

Using a Microsoft Fast Track Reference architecture way harder to obtain this value and the solution used is way harder to maintain in a production environment.

It is all about latency!

The most important about this new architecture with Storage Spaces is that the latency was drastically reduced. A Fast Track reference architecture will provide the results with a 24ms+ latency and our ATOS Performance Solutions based on SQL Server 2014 was capable to return the data much faster with less processors (think about the license costs reduction!) and therefore could serve way more users (easily more than a 100 simultaneously in fact).

The future architecture of Microsoft Analytics Platform System

I don't think that Microsoft Analytics Platform System as it exist now will continue: it is just too complex. Next to that it uses old 6 Gb/s storage hardware when everywhere there are 12 Gb/s solutions available and some JBOD's do not even support the use of SSD's (HP). Not very flexible then.

A new and more hybrid solution will be based on a Windows Storage Server solution with Cluster Shared Volumes. Probably one Cluster per rack. The storage cluster solution means that the servers used need to have more PCI slots to provide a resilient solution.

On top of that will be running the 8 (HP) or 9 (Dell/Quanta) SQL Server compute nodes and because the CSV are highly available by design (maybe using two Melinox 56 Gb/s Infini Band cards per node) and a lower latency and a higher performance on the tempdb overall performance off the Microsoft Analytics Platform System increases beyond expectations.

Comments

Post a Comment